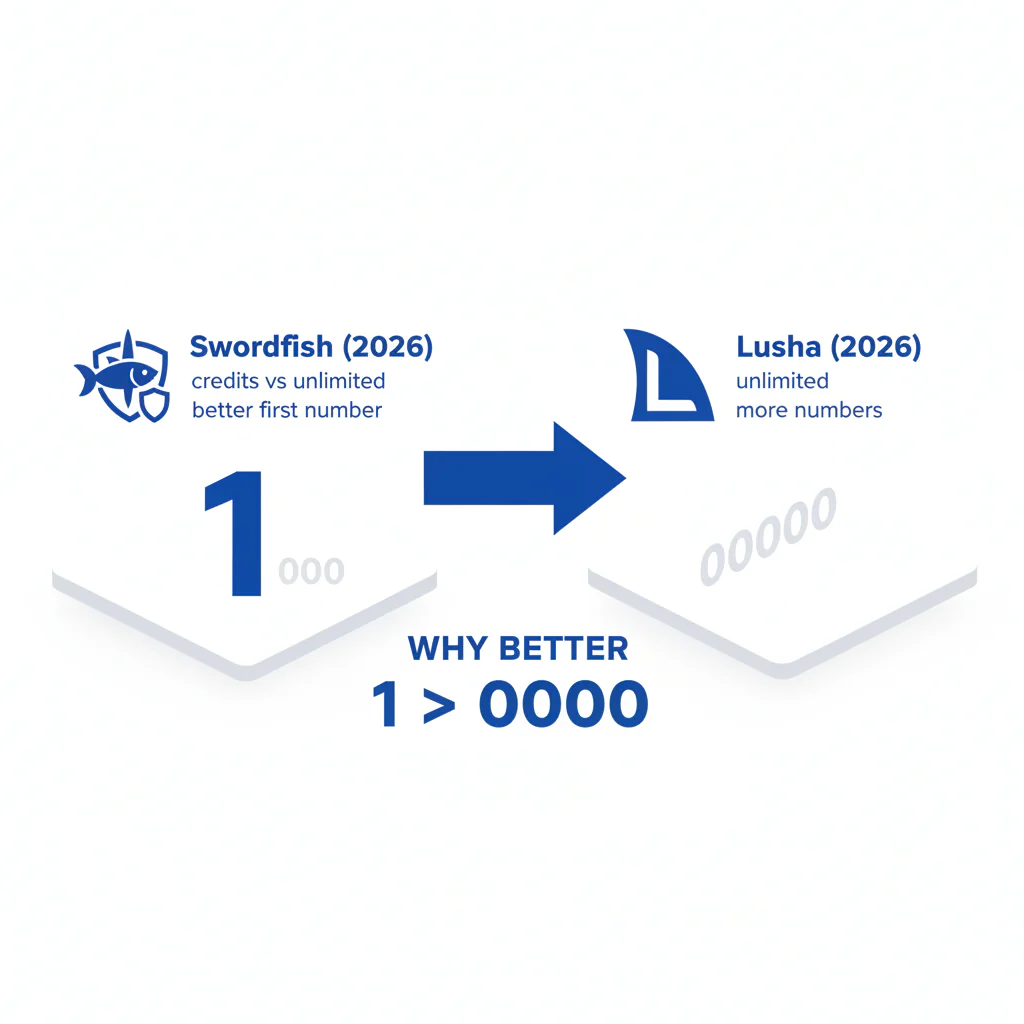

Swordfish vs Lusha (2026): credits vs unlimited, and why better first number beats more numbers

Byline: Ben Argeband, Founder & CEO of Swordfish.AI

Who this is for

Buyers comparing “unlimited” claims who want predictable usage and fewer surprises. You’re trying to avoid the usual failure modes: credit burn you can’t forecast, data decay that quietly lowers connect rate, and integrations that turn your CRM into a conflict zone.

Quick verdict

- Core answer: In Swordfish vs Lusha, the operational difference is credits vs unlimited. Lusha commonly runs on a credit-governed pricing model (often discussed as Lusha credits). Swordfish is designed around fair-use unlimited workflows where the buyer question is “what happens at the limit?” not “how many credits are left?”

- Key stat: Connect rate. If the first dial doesn’t reach the person, you pay in retries, rep time, and CRM noise. That’s why better first number matters more than “more numbers returned.”

- Ideal user: Teams doing high-volume sales prospecting or recruiter contact data lookups who need consistent mobile coverage, explicit verification signals, and pricing that doesn’t change meaning when seat count or API usage grows.

Choose Swordfish if credit ceilings or unclear enforcement slow reps down and you need predictable day-to-day usage. Choose Lusha if your usage is low, forecastable, and you can model credit burn across extension, export, and API before rollout.

This page uses a myth-busting framework because contact data vendors tend to demo the same illusions: big “match rates,” lots of numbers per person, and “unlimited” that turns into throttling once you’re dependent.

Checklist: Feature Gap Table

| Buying concern (what breaks in production) | Swordfish (what to verify) | Lusha (what to verify) | Hidden cost if you ignore it |
|---|---|---|---|
| Pricing model clarity under scale (seats + usage) | Get the fair-use definition in writing: what triggers throttling, review, or restrictions, and whether API usage is treated differently. | Get the credit rules in writing: what consumes credits (extension, export, enrichment, API) and what happens when credits run out. | Budget variance and mid-quarter slowdowns when usage spikes (new SDR class, recruiting surge, campaign pushes). |
| Credits vs unlimited workflow friction | Confirm normal prospecting can run without counting per-contact consumption and that enforcement is predictable. | Confirm whether “getting a mobile” costs more than “getting any phone,” and whether team sharing/export changes burn. | Reps ration enrichment, coverage drops, and pipeline math gets unreliable. |
| Mobile coverage on your ICP (not a demo list) | Test mobile availability on your own sample by industry and geography, then check which number reps dial first. | Run the same test and compare outcomes, not just presence of a number. | More dials per meeting and higher spam-label risk when reps retry dead numbers. |
| Verification you can automate | Confirm verification metadata is visible and exportable so you can route higher-confidence numbers to the dialer first. | Confirm whether verification is explicit and exportable, or mostly implied in the UI. | Integration headaches: no routing rules, reps guess, and your CRM fills with low-confidence fields. |
| Direct dial quality vs “more numbers returned” | Audit first-dial outcomes. Treat extra numbers as fallback only if they improve connects. | Audit the same. Don’t score “we returned 3 numbers” as success if none connect. | Time tax: retries, voicemail loops, and sequences that stall because the first number is wrong. |
| Extension workflow (rep adoption) | Validate the browser workflow and how it fits LinkedIn and CRM tabs; see Swordfish extension. | Validate extension behavior and identify which actions consume credits. | Adoption drop: if reps feel every lookup is “spend,” usage collapses and data coverage decays. |
| CRM overwrite and dedupe rules | Confirm you can avoid overwriting a good number with a weaker one and can control enrichment scope. | Confirm overwrite controls and how exports/enrichment interact with existing fields. | CRM pollution: conflicting phones, duplicate contacts, and reporting that stops matching reality. |

What Swordfish does differently

1) Prioritized direct dials built around better first number

Most teams don’t fail because they lack “more numbers.” They fail because the first number reps dial doesn’t connect. Swordfish is designed to prioritize the best dial first, because better first number reduces retries and improves connect rate without forcing reps to guess which field is real.

2) Mobile coverage with verification signals you can route on

Mobile coverage only matters if it holds on your ICP and if you can act on confidence. Swordfish emphasizes verification signals so RevOps can route higher-confidence numbers to the dialer first and treat lower-confidence numbers as fallback. That reduces wasted dials and reduces CRM churn from “try everything” behavior.
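To make "route on verification" concrete, here is a minimal Python sketch of dial ordering. The field names (`line_type`, `confidence`) and the labels in `CONFIDENCE_RANK` are hypothetical stand-ins, not Swordfish's or any vendor's actual export schema; map them to whatever verification fields your vendor really exposes.

```python
from dataclasses import dataclass

# Hypothetical confidence labels (assumption). Lower rank = dial sooner.
CONFIDENCE_RANK = {"verified": 0, "likely": 1, "unverified": 2}


@dataclass(frozen=True)
class PhoneCandidate:
    number: str
    line_type: str   # assumed field, e.g. "mobile" or "landline"
    confidence: str  # assumed field, a key of CONFIDENCE_RANK


def dial_order(candidates):
    """Sort so the highest-confidence number is dialed first.

    Unknown confidence labels sort last; mobiles break ties ahead of
    other line types.
    """
    def sort_key(c):
        rank = CONFIDENCE_RANK.get(c.confidence, len(CONFIDENCE_RANK))
        return (rank, 0 if c.line_type == "mobile" else 1)

    return sorted(candidates, key=sort_key)


candidates = [
    PhoneCandidate("+1-555-0100", "landline", "unverified"),
    PhoneCandidate("+1-555-0101", "mobile", "verified"),
    PhoneCandidate("+1-555-0102", "mobile", "likely"),
]
ordered = dial_order(candidates)
print([c.number for c in ordered])
```

If your dialer can only take one number per contact, push `ordered[0]` and keep the rest as fallback fields rather than letting reps pick.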

3) Fair-use unlimited that you can audit

“Unlimited” is a contract word, not an operational guarantee. Swordfish is built around fair-use unlimited workflows, which means you should ask one question before you argue about price: what happens at the limit. If you’re trying to compare “unlimited” claims across vendors, use unlimited contact credits to audit enforcement mechanics so you don’t discover the real rules after rollout.

Get the enforcement mechanics in writing: whether throughput slows, whether exports are restricted, and whether API usage is treated differently than extension usage. If a vendor can’t explain enforcement, you’re buying a future incident ticket.

4) Extension-first workflow for repeated use

If your reps live in LinkedIn and your CRM, the extension is the product. If the extension feels like a meter running, adoption drops and your coverage decays. Swordfish’s workflow is designed for repeated use in the browser; see Swordfish extension.

Decision guide

Most “vs” decisions go wrong because buyers test the wrong thing. They test “records returned” instead of outcomes, then act surprised when connect rate doesn’t move.

Myth-busting

Myth: More numbers returned means better coverage. Reality: If the first number doesn’t connect, extra numbers usually add retries and conflicting CRM fields.

Myth: “Unlimited” means no limits. Reality: It means limits are enforced differently; you need the enforcement rules in writing.

Myth: A vendor’s demo list predicts your results. Reality: Your variance drivers are seat count, API usage, list quality, and industry/geography.

Variance explainer (why your results will differ):

- Seat count: More seats means more parallel usage. Credit systems can look fine in a small pilot and become a daily constraint once the team scales.

- API usage: If you enrich inbound leads or run scheduled jobs, you’ll find out whether “unlimited” excludes API or whether credits drain faster than expected.

- List quality: Clean lists make every vendor look good. Messy lists expose matching and verification weaknesses and accelerate CRM pollution.

- Industry/geography: mobile coverage varies by region and role. Recruiting lists decay faster because job changes break contactability.

Integration failure points to audit before you sign:

- Dedupe: If enrichment creates duplicates, your reps call the same person twice and your reporting lies.

- Overwrite precedence: If a weaker number overwrites a good one, connect rate drops and nobody notices until pipeline slips.

- Field-level confidence routing: If verification can’t be used in rules, reps guess and your CRM becomes a dumping ground.
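The dedupe and overwrite-precedence checks above can be expressed as a single merge rule. This is a sketch under assumptions: records are keyed by normalized email, and the `confidence` labels in `RANK` are hypothetical. Your CRM's actual dedupe key and your vendor's verification fields will differ.

```python
# Hypothetical confidence ranking (assumption). Lower is better.
RANK = {"verified": 0, "likely": 1, "unverified": 2}


def normalize_email(email):
    """Dedupe key: trimmed, lowercased email (an assumed matching rule)."""
    return email.strip().lower()


def merge_enrichment(crm, incoming):
    """Merge enrichment results into crm, a dict keyed by normalized email.

    - Dedupe: a record whose email already exists updates that record
      instead of creating a second contact.
    - Overwrite precedence: the existing phone is kept unless the incoming
      phone has strictly higher confidence.
    """
    for rec in incoming:
        key = normalize_email(rec["email"])
        current = crm.get(key)
        if current is None:
            crm[key] = {
                "email": key,
                "phone": rec["phone"],
                "confidence": rec["confidence"],
            }
            continue
        new_rank = RANK.get(rec["confidence"], len(RANK))
        old_rank = RANK.get(current["confidence"], len(RANK))
        if new_rank < old_rank:
            current["phone"] = rec["phone"]
            current["confidence"] = rec["confidence"]
    return crm


crm = {
    "ana@example.com": {
        "email": "ana@example.com",
        "phone": "+1-555-0110",
        "confidence": "verified",
    }
}
incoming = [
    {"email": " Ana@Example.com ", "phone": "+1-555-0111", "confidence": "unverified"},
    {"email": "bo@example.com", "phone": "+1-555-0112", "confidence": "likely"},
]
merge_enrichment(crm, incoming)
print(crm)
```

The strict comparison is deliberate: an equal-confidence number does not replace what reps are already dialing, which keeps the field stable between enrichment runs.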

If your workflow depends on Lusha credits, model burn across extension, export, and API or expect reps to ration enrichment and your connect rate to drift.
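A rough way to model that burn before rollout is a few lines of Python. Every number below is a placeholder: the per-action costs in `ASSUMED_COST` are not Lusha's (or anyone's) real rates, and the volumes are illustrative. Replace them with the written credit rules you get from the vendor and your own usage logs.

```python
def monthly_credit_burn(seats, lookups_per_seat, exports, api_calls, cost):
    """Estimate credits consumed per month on each surface.

    cost maps a surface name to credits per action (placeholder values).
    """
    return {
        "extension": seats * lookups_per_seat * cost["extension"],
        "export": exports * cost["export"],
        "api": api_calls * cost["api"],
    }


def months_of_runway(credit_pool, burn):
    """How many months a credit pool lasts at the given monthly burn."""
    total = sum(burn.values())
    return float("inf") if total == 0 else credit_pool / total


# Placeholder per-action costs (assumption, not any vendor's real pricing).
ASSUMED_COST = {"extension": 1, "export": 1, "api": 1}

pilot = monthly_credit_burn(
    seats=5, lookups_per_seat=200, exports=500, api_calls=0, cost=ASSUMED_COST
)
scaled = monthly_credit_burn(
    seats=25, lookups_per_seat=200, exports=2000, api_calls=10000, cost=ASSUMED_COST
)

for label, burn in (("pilot", pilot), ("scaled", scaled)):
    print(label, burn, "total:", sum(burn.values()))
```

Running the pilot and scaled scenarios side by side is the point: a pool that looks generous at five seats with no API traffic can disappear quickly once scheduled enrichment jobs are added.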

If you want a baseline for evaluating datasets without vendor theater, use data quality to structure your test around decay, verification, and outcomes.

How to test with your own list (7 steps)

Keep outreach conditions identical across both halves (same channel, same timing) so you’re testing data, not process.

1) Pull a representative sample from your CRM: mix of recent and older records, and your real industry/geography distribution.

2) Freeze the sample so both tools are tested on the same inputs and you can rerun later to observe data decay in your ICP.

3) Define success upfront: connect rate for calls, bounce/invalid for email, and “time-to-first-connect” (how many attempts before a real conversation) as an internal ops metric.

4) Split the list randomly into two equal halves, one per tool, and run the same workflow on each (extension lookups, exports, and API enrichment if you use it).

5) Record first-dial outcomes to test better first number. Don’t reward “more numbers returned” unless it improves connects.

6) Audit enforcement behavior: note what actions consume credits (if applicable) and whether any throttling/restrictions appear under realistic throughput.

7) Check CRM impact: duplicates created, overwrites, and whether verification metadata survives into the fields your reps actually use.
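The split and the two call metrics from the steps above can be scored with a short Python sketch. It assumes you log each contact's dial attempts in order as `True` (connected) or `False`; that log shape, the function names, and the fixed `seed` are illustrative choices, not part of any vendor's tooling.

```python
import random
from statistics import mean


def split_sample(contact_ids, seed=42):
    """Shuffle deterministically, then split into two halves."""
    ids = list(contact_ids)
    random.Random(seed).shuffle(ids)
    mid = len(ids) // 2
    return ids[:mid], ids[mid:]


def first_dial_connect_rate(attempts):
    """Share of dialed contacts whose FIRST dial connected.

    attempts maps contact_id -> list of booleans, one per dial, in order.
    Contacts with no dials are ignored.
    """
    dialed = [a for a in attempts.values() if a]
    if not dialed:
        return 0.0
    return sum(1 for a in dialed if a[0]) / len(dialed)


def mean_attempts_to_first_connect(attempts):
    """Average 1-based position of the first connect, over contacts that
    connected at all. Returns None if nobody connected."""
    firsts = [a.index(True) + 1 for a in attempts.values() if True in a]
    return mean(firsts) if firsts else None


half_a, half_b = split_sample(range(10))

# Illustrative dial log for one half (made-up outcomes, not real results).
log = {"c1": [True], "c2": [False, True], "c3": [False, False], "c4": [True]}
print(first_dial_connect_rate(log))
print(mean_attempts_to_first_connect(log))
```

Score each half with the same two functions and compare. A tool that returns more numbers but does not raise the first-dial rate has not improved the metric this test is designed to measure.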

Weighted checklist

| Criterion (standard failure point) | Weight (why it’s weighted) | What to ask / verify | Business outcome |
|---|---|---|---|
| Pricing transparency in the pricing model | Highest (hidden enforcement drives surprise spend and workflow slowdowns) | Get written rules for credits or fair use, including what changes with seat count and API usage. | Predictable spend and fewer mid-quarter interruptions. |
| Credits vs unlimited fit for your workflow | Highest (workflow friction compounds daily) | Map your actions: lookups, exports, enrichment, API calls. Identify what consumes credits and what doesn’t. | Higher adoption and consistent coverage across the team. |
| Mobile number accuracy and direct dial quality | High (connect rate is the outcome you’re buying) | Run a first-dial test on your ICP sample and compare connects, not “numbers returned.” | More conversations per rep-hour and fewer retries. |
| Verification metadata availability | High (automation depends on machine-usable signals) | Confirm verification flags are visible, exportable, and usable in CRM/dialer routing rules. | Less wasted dialing and cleaner CRM fields. |
| Integration overhead (CRM + dialer + enrichment rules) | Medium (implementation cost shows up after procurement) | Ask how dedupe, overwrite precedence, and enrichment scope are handled. | Less CRM pollution and fewer RevOps fire drills. |
| Extension usability (rep adoption) | Medium (adoption is binary) | Have a small rep group run the same workflow for a week and report friction points. | Higher usage consistency and better coverage. |
| Support response and auditability | Lower (matters after the above are solved) | Ask what logs/exports you can get for troubleshooting and how escalations work. | Shorter time-to-fix when something breaks. |
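If you want a single comparable number out of the checklist, one option is a weighted average. The table only gives qualitative weights, so the numeric mapping in `WEIGHTS` (Highest=4 down to Lower=1) and the 0 to 5 rating scale are my assumptions for illustration; adjust both to your own priorities.

```python
# Assumed numeric mapping for the table's qualitative weights.
WEIGHTS = {"Highest": 4, "High": 3, "Medium": 2, "Lower": 1}

# Criteria from the table, with shortened (hypothetical) key names.
CRITERIA = {
    "pricing_transparency": "Highest",
    "credits_vs_unlimited_fit": "Highest",
    "mobile_accuracy": "High",
    "verification_metadata": "High",
    "integration_overhead": "Medium",
    "extension_usability": "Medium",
    "support_auditability": "Lower",
}


def weighted_score(ratings):
    """Weighted average of per-criterion ratings (0 to 5 scale).

    Raises ValueError if any criterion is unrated, so a vendor cannot
    score well by skipping a hard question.
    """
    missing = sorted(set(CRITERIA) - set(ratings))
    if missing:
        raise ValueError(f"unrated criteria: {missing}")
    total_weight = sum(WEIGHTS[level] for level in CRITERIA.values())
    weighted = sum(WEIGHTS[CRITERIA[name]] * ratings[name] for name in CRITERIA)
    return weighted / total_weight


# Made-up ratings for a hypothetical vendor, from your own pilot notes.
vendor_a = {name: 3 for name in CRITERIA}
vendor_a["pricing_transparency"] = 5
print(round(weighted_score(vendor_a), 2))
```

Treat the score as a tiebreaker, not a verdict: a failed stop condition (for example, no written enforcement rules) should still disqualify a vendor regardless of the total.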

Conditional decision tree

- If you run high-volume outbound or recruiting and you routinely hit usage ceilings, then prioritize fair-use unlimited workflows with written enforcement rules. Stop condition: if the vendor cannot explain in writing what happens at the limit (throttle, review, restriction), do not buy.

- If finance needs predictable spend and you can forecast usage tightly, then a credit-based pricing model can work if credit burn is consistent across extension, export, and API. Stop condition: if credit consumption differs across surfaces and you can’t model it, expect budget variance.

- If your pain is low connect rate, then run a first-dial test and optimize for better first number. Stop condition: if first-dial connects don’t improve, extra numbers are noise.

- If you need automation (routing, sequencing, enrichment rules), then require explicit verification metadata in exports/API. Stop condition: if verification is not machine-usable, your team will guess and your CRM will drift.

Limitations and edge cases

Fair use still has boundaries. The risk is not that limits exist; it’s that they’re vague. Mitigation is to get enforcement rules in writing and test with realistic throughput, including API usage if you rely on it.

Mobile coverage varies by ICP. International coverage, niche roles, and high job-change segments will show more variance. Treat any generic coverage claim as marketing until your own list test confirms it.

Verification only helps if you operationalize it. If your dialer and CRM can’t route by verification, you’ll treat all numbers equally and lose the benefit. That’s an integration decision, not a data decision.

Credit systems can be acceptable for low-volume teams. The failure mode is growth: more seats, more automation, and suddenly the tool becomes a daily constraint instead of a utility.

Evidence and trust notes

I’m biased: I’m the Founder & CEO of Swordfish.AI. This page is written like an audit because that’s how contact data tools succeed or fail: enforcement, decay, and integration behavior under real usage.

Competitor/source discipline: this page does not claim universal accuracy, universal coverage, or specific competitor plan details. Results vary by seat count, API usage, list quality, and industry/geography.

What you should request from any vendor (including us) before signing:

- Written enforcement language: for credits or fair use, including what happens at the limit and whether API usage is treated differently.

- Verification field definitions: what each verification signal means and whether it is exportable and available via API.

- Export/API parity clarification: confirm whether the same data and metadata are available across extension, export, and API, and what triggers restrictions.

- Privacy and compliance paperwork: request the DPA, opt-out handling documentation, and a clear escalation path for data disputes.

If you’re trying to understand how credit-based purchasing typically behaves in practice, start with Lusha pricing and map it to your actual workflows.

FAQs

Is Swordfish better than Lusha?

It depends on what you’re optimizing for. If your risk is credit burn and unpredictable enforcement, Swordfish’s fair-use unlimited approach is usually easier to operate. If your usage is low and forecastable, a credit-based model can be workable if you can model consumption across extension, export, and API.

What does credits vs unlimited mean in practice?

Credits mean each action consumes a unit you can run out of, which changes rep behavior. Fair-use unlimited means you should be able to run normal workflows without counting, but you still need written enforcement rules so you don’t discover throttling after rollout.

Why is better first number more important than more numbers returned?

Because reps dial in order. If the first number doesn’t connect, you pay in retries, time, and CRM clutter. Extra numbers only help if they provide a reliable fallback with clear confidence ordering.

How should I evaluate mobile number accuracy?

Use your own ICP sample and measure outcomes you already track: connects, wrong numbers, and invalid/bounce signals. Don’t accept "we returned a mobile" as success if it doesn’t improve connect rate.

Does Lusha use credits?

Lusha commonly uses credits in its pricing model. The operational question is how credits are consumed across extension usage, exports, and API/enrichment. For details and what to watch for, see Lusha pricing.

Where can I see a deeper review of Lusha?

Use Lusha review for a workflow-focused breakdown, then compare against your own pilot results.

What if I’m comparing multiple vendors, not just these two?

Start with Lusha alternatives, then run the same "own list" test so you’re comparing outcomes, not demos.

Next steps

- Day 0–1: Pull your ICP sample from CRM, define success (connect rate, bounces/invalids), and document your workflow (extension, export, API).

- Day 2–4: Run the split-list pilot and capture first-dial outcomes to test better first number.

- Day 5–7: Audit enforcement behavior under realistic throughput and document credit burn or fair-use restrictions.

- Week 2: Implement with guardrails: dedupe rules, overwrite precedence, and verification routing so your CRM doesn’t degrade.

If your team works in the browser, start by validating the workflow in the Swordfish extension.

About the Author

Ben Argeband is the Founder and CEO of Swordfish.AI and Heartbeat.ai. With deep expertise in data and SaaS, he has built two successful platforms trusted by over 50,000 sales and recruitment professionals. Ben’s mission is to help teams find direct contact information for hard-to-reach professionals and decision-makers, providing the shortest route to their next win. Connect with Ben on LinkedIn.
